Memoli, G., Chisari, L., Eccles, J. P., Caleap, M., Drinkwater, B. W., & Subramanian, S. (2019). VARI-SOUND. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems – CHI ’19 (pp. 1–14). New York, New York, USA: ACM Press.

https://doi.org/10.1145/3290605.3300713

Abstract

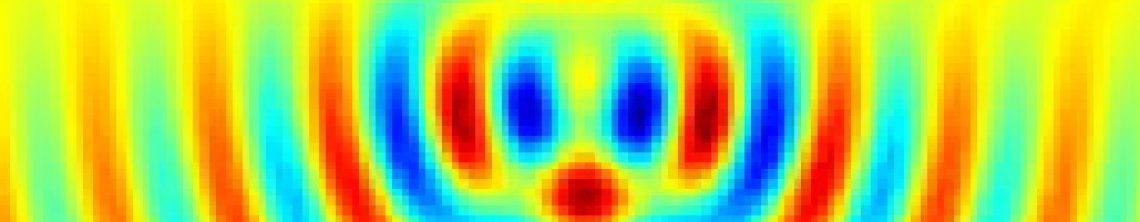

Centuries of development in optics have given us passive devices (i.e. lenses, mirrors and filters) to enrich audience immersivity with light effects, but there is nothing similar for sound. Beam-forming in concert halls and outdoor gigs still requires a large number of speakers, while headphones are still the state-of-the-art for personalized audio immersivity in VR. In this work, we show how 3D printed acoustic metasurfaces, assembled into the equivalent of optical systems, may offer a different solution. We demonstrate how to build them and how simple design tools, like the thin-lens equation, can also be used for sound. We present some key acoustic devices, like a “collimator”, to transform a standard computer speaker into an acoustic “spotlight”; and a “magnifying glass”, to create sound sources that appear to come from locations different from that of the speaker itself. Finally, we demonstrate an acoustic varifocal lens, discussing applications equivalent to auto-focus cameras and VR headsets and the limitations of the technology.
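
The thin-lens equation mentioned in the abstract, 1/f = 1/d_o + 1/d_i, can be tried out numerically. The following is a minimal sketch, not the authors' design tool: the function names `image_distance` and `magnification` are hypothetical, the focal length and distances are made-up illustrative values in metres, and the "real is positive" sign convention is an assumption.

```python
import math


def image_distance(focal_length: float, source_distance: float) -> float:
    """Solve the thin-lens equation 1/f = 1/d_o + 1/d_i for d_i.

    Assumed sign convention: a positive result is a real image on the far
    side of the lens; a negative result is a virtual image on the same
    side as the source. Returns math.inf when the source sits at the
    focal point (the outgoing beam is collimated).
    """
    if math.isclose(source_distance, focal_length):
        return math.inf
    return focal_length * source_distance / (source_distance - focal_length)


def magnification(focal_length: float, source_distance: float) -> float:
    """Lateral magnification m = -d_i / d_o for a finite image distance."""
    d_i = image_distance(focal_length, source_distance)
    return -d_i / source_distance


if __name__ == "__main__":
    f = 0.10  # hypothetical focal length of the acoustic lens, in metres

    # Source at the focal point: "collimator" case, image at infinity.
    print(image_distance(f, 0.10))

    # Source beyond the focal point: real image (sound focused to a spot).
    print(image_distance(f, 0.20))

    # Source inside the focal length: virtual image, "magnifying glass" case.
    print(image_distance(f, 0.05), magnification(f, 0.05))
```

With these assumed values, a speaker placed at the focal point corresponds to the "collimator" configuration, while a speaker placed inside the focal length gives a negative image distance, meaning the sound appears to originate from a virtual source behind the speaker, as in the "magnifying glass" configuration.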

Video